Donald Bailey

- BE (Hons) in Electrical Engineering, University of Canterbury, NZ

- PhD, Electrical and Electronic Engineering, University of Canterbury

- Teaching, School of Engineering and Advanced Technology, Massey University

- Image and video processing

- Embedded systems

- Digital signal processing

- Digital electronics

- Advanced computer engineering

- Major leader for BE (Electronics and Computer Engineering) programme

- Author of "Design for Embedded Image Processing on FPGAs" (2011 - Wiley/IEEE)

- Regularly teach industry workshops on "Image Processing using FPGAs"

- NZ Engineering Excellence Award 2013 (automated digital microscope for palynology)

- Chair, IEEE NZ Central Section

- World Trax champion (1990-1994, 1996-present)

Introduction


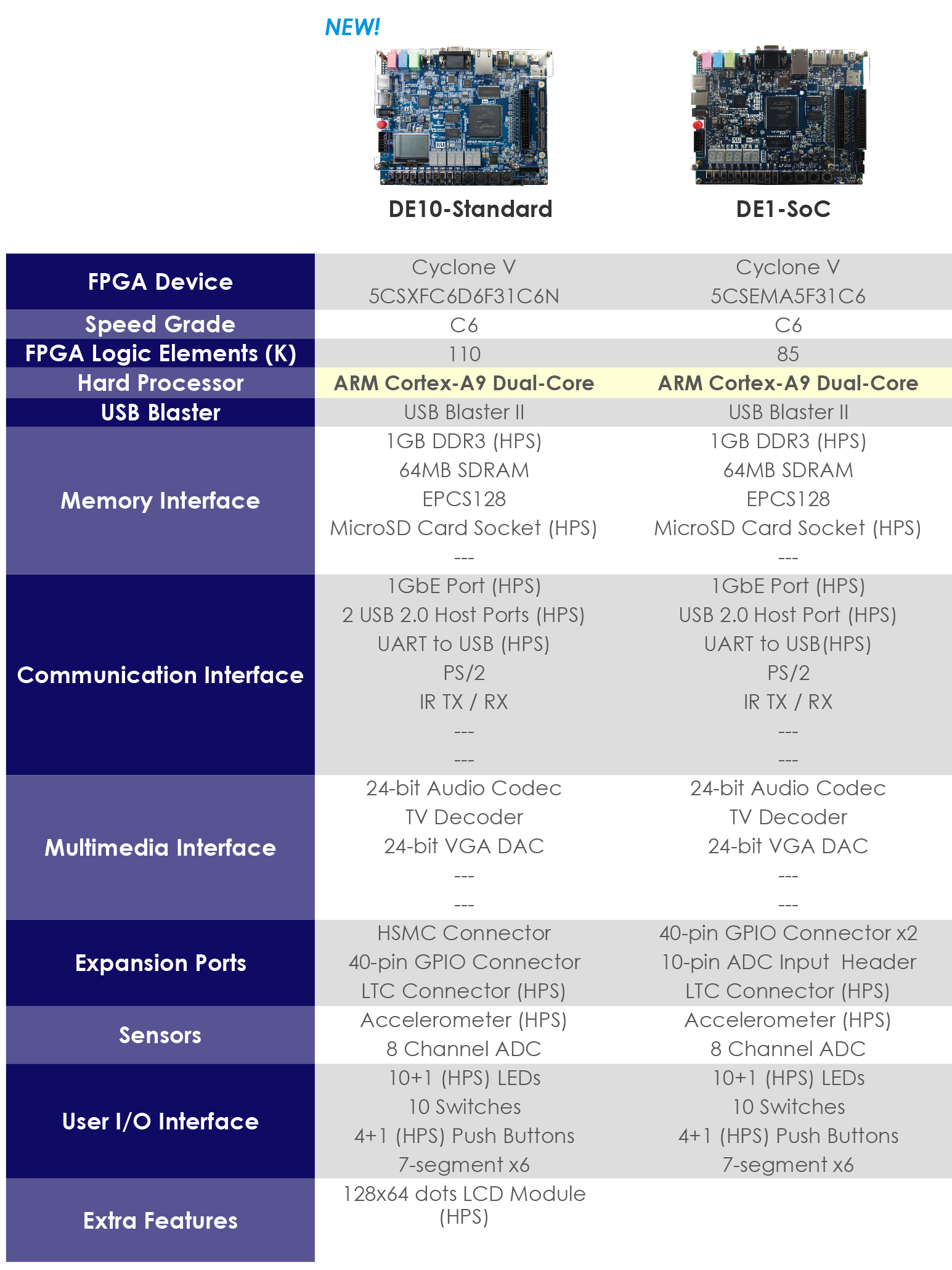

The purpose of this review was to evaluate the new DE10-Standard board from Terasic in terms of its utility as an entry-level platform for both teaching and research in image processing.

Exploring real-time image and video processing requires a camera, an FPGA, and a display. For the camera, a Terasic TRDB-D5M module was used, which plugs directly into the 40-pin expansion header of the DE10-Standard. I was pleased that the new board did not do away with this connector, as it is the easiest way to capture images directly into the FPGA. A standard computer monitor was connected via the VGA connector of the board.

Resources

Resource-wise, the DE10-Standard is a step up from the DE1-SoC. In terms of peripherals, it is much the same, with the addition of the HSMC expansion connector. However, the larger FPGA (110K logic elements compared with 85K for the DE1-SoC) allows more complex image processing problems to be tackled with greater ease.


The 553 block memories (each 10 kbits) are a significant (40%) increase on the number in the DE1-SoC. These are sufficient to store 2 full frames at 640x480 resolution, or 1 frame at 800x600. However, this is probably not the best use of this memory, and at 1024x768 resolution or higher there is not enough to store a full frame.

For this reason, I configure the camera to be line synchronous with the display. The timing requirements of the camera mean that it has to operate at a higher clock frequency than the display. This is solved relatively simply by using one of the phase-locked loops (PLLs) on the Cyclone V to generate both clocks, one for the camera and one for the display. Using the PLL means that the relationship between the two clocks can be controlled to ensure that the camera and display remain line synchronous. A single line buffer, constructed from 1 or 2 block memories (depending on the frame width and number of bits per pixel), can be configured as a dual-port memory, enabling pixel data to be transferred between the two clock domains. This arrangement also allows many operations to be performed on the image (as a pixel stream) directly as it streams from the camera to the display.
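
The capacity figures above can be checked with a little arithmetic. This is a sketch under stated assumptions: 8 bits per pixel (the review does not give the pixel depth, but this value reproduces the quoted frame counts), 10,240 bits per block, and the usual Cyclone V M10K width/depth configurations. The helpers `frames_that_fit` and `line_buffer_blocks` are hypothetical names, and the line-buffer estimate does not model cascading blocks in depth.

```python
# Assumed M10K block size and (data width, depth) configurations for one block.
M10K_BITS = 10 * 1024
M10K_MODES = [(1, 8192), (2, 4096), (5, 2048), (10, 1024), (20, 512), (40, 256)]


def frames_that_fit(width, height, blocks=553, bits_per_pixel=8):
    """Whole frames that fit in the block memories, counting raw bits only."""
    total_bits = blocks * M10K_BITS
    return total_bits // (width * height * bits_per_pixel)


def line_buffer_blocks(width, bits_per_pixel):
    """Blocks for one line buffer: the widest mode that is still deep enough for a line."""
    for mode_width, depth in sorted(M10K_MODES, reverse=True):
        if depth >= width:
            return -(-bits_per_pixel // mode_width)  # ceiling division
    raise ValueError("line longer than one block is deep; cascading not modelled")


if __name__ == "__main__":
    for w, h in [(640, 480), (800, 600), (1024, 768)]:
        print(f"{w}x{h}: {frames_that_fit(w, h)} frame(s)")
    print("1024-pixel line, 8 bpp:", line_buffer_blocks(1024, 8), "block(s)")
    print("1024-pixel line, 16 bpp:", line_buffer_blocks(1024, 16), "block(s)")
```

With these assumptions the frame counts come out as 2, 1 and 0, and a 1024-pixel line buffer needs 1 block at 8 bits per pixel or 2 blocks at 16, consistent with the text.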


For applications that require frame buffering, the DE10-Standard board, like the DE1-SoC, has a 64 MB DRAM chip. This is enough to hold a significant number of frames (over 40 frames at 1024x768 with 16 bits per pixel), although the bandwidth between the memory and the FPGA may be the limiting factor in many applications. That said, even at 1024x768 resolution there is sufficient bandwidth to perform both a read and a write of a 16-bit wide image within the frame period. Again, this requires running the DRAM with a higher frequency clock (I used 100 MHz) and using dual-port block memories to transfer the data between clock domains.
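
A rough check of the bandwidth claim, as a sketch only: it assumes a 16-bit DRAM data bus transferring one word per clock, 60 frames per second, and no refresh or row-activation overhead, none of which are stated in the review. `dram_utilisation` is a hypothetical helper.

```python
def dram_utilisation(width, height, fps, dram_clock_hz,
                     bus_bits=16, pixel_bits=16, accesses_per_pixel=2):
    """Fraction of the ideal DRAM bandwidth needed within one frame period.

    accesses_per_pixel=2 models one write and one read of every pixel.
    Assumes one bus word per DRAM clock and ignores refresh and row overheads.
    """
    words_per_pixel = pixel_bits / bus_bits
    words_needed = width * height * accesses_per_pixel * words_per_pixel
    cycles_available = dram_clock_hz / fps
    return words_needed / cycles_available


if __name__ == "__main__":
    u = dram_utilisation(1024, 768, fps=60, dram_clock_hz=100e6)
    print(f"1024x768 read+write at 100 MHz uses {u:.0%} of the ideal bandwidth")
```

Under these assumptions the read and write together use about 94% of the ideal bandwidth, so the transfer fits, but with little margin left for refresh and other overheads.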

The plentiful block memories make stream-based filters and other related operations straightforward to implement. There is also a 25% increase in the number of DSP blocks in the DE10-Standard compared with the DE1-SoC. Each of the 112 DSP blocks can be used for one 27x27 multiplication, two 18x18 multiplications, or three 9x9 multiplications. For many image processing applications, this effectively means that up to 336 hardware multipliers are available.
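
To illustrate why block memories suit stream-based filters, here is a software model of a 3x3 window filter fed one pixel per clock in raster order. Two row buffers (each of which would map onto a block memory in hardware) delay the stream by one and two rows, so that each new pixel completes a 3x3 window. This is a generic sketch of the technique rather than code from the review; the nine multiplications per output are what would be mapped onto DSP blocks (with 8-bit data, plausibly using the 9x9 mode), which is an assumption.

```python
from collections import deque


def stream_filter_3x3(pixels, width, kernel):
    """Model of a streamed 3x3 window filter built from two row buffers.

    pixels: pixel values in raster-scan order (one per clock in hardware).
    kernel: 3x3 nested list of integer coefficients, applied as a correlation.
    Returns {(x, y): value} for every window lying fully inside the image.
    """
    row_buf1 = deque([0] * width, maxlen=width)  # delays the stream by one row
    row_buf2 = deque([0] * width, maxlen=width)  # delays the stream by two rows
    columns = deque(maxlen=3)                    # the 3x3 window, one column per entry
    out = {}
    for i, p in enumerate(pixels):
        x, y = i % width, i // width
        up1 = row_buf1[0]          # pixel at (x, y - 1)
        up2 = row_buf2[0]          # pixel at (x, y - 2)
        row_buf1.append(p)         # maxlen discards the oldest entry
        row_buf2.append(up1)
        columns.append((up2, up1, p))
        if x >= 2 and y >= 2:      # window lies fully inside the image
            acc = 0
            for c in range(3):
                for r in range(3):
                    acc += kernel[r][c] * columns[c][r]  # one multiplier per tap
            out[(x - 1, y - 1)] = acc  # result belongs to the window centre
    return out
```

In hardware the row buffers are the only large storage, which is why wider images or more filter stages consume block memories rather than logic.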

In terms of other board resources on the DE10-Standard

and DE1-SoC

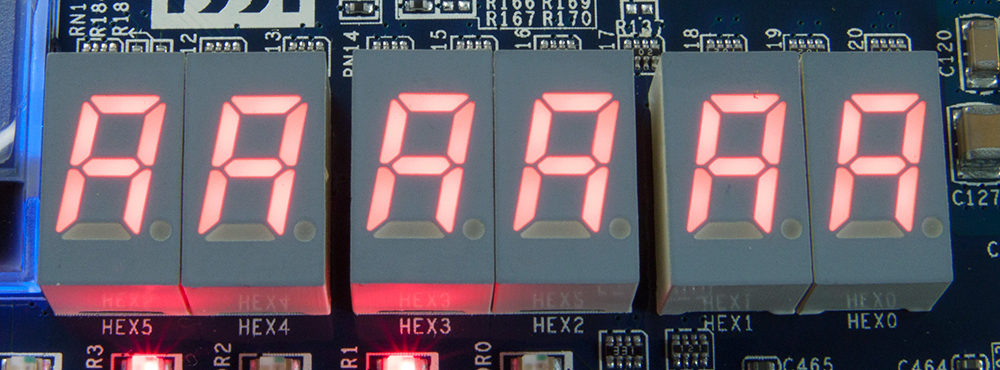

, I have made use of the 7 segment displays, LEDs, the switches and buttons, and the infra-red receiver. The 7 segment displays can be used for providing a small amount of status information. I have used them to give feedback on the exposure, gain, and white balance (when using the FPGA to implement automatic exposure control, and automatic white balancing). I have also used them to give the position of an object when tracking an object. The LEDs can similarly give status information.
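
As a small example of showing a status value such as an exposure setting, the sketch below converts a number into per-digit segment patterns in hexadecimal. The segment-to-bit ordering (bit 0 = segment a through bit 6 = segment g), the six-digit default, and the active-low drive are assumptions about the board wiring, and `seven_segment` is a hypothetical name.

```python
# Segment patterns for hex digits 0-F: bit 0 = segment a ... bit 6 = segment g (assumed).
HEX_SEGMENTS = [0x3F, 0x06, 0x5B, 0x4F, 0x66, 0x6D, 0x7D, 0x07,
                0x7F, 0x6F, 0x77, 0x7C, 0x39, 0x5E, 0x79, 0x71]


def seven_segment(value, digits=6, active_low=True):
    """Return one 7-bit segment pattern per digit, least significant digit first."""
    patterns = []
    for _ in range(digits):
        seg = HEX_SEGMENTS[value & 0xF]
        patterns.append(seg ^ 0x7F if active_low else seg)  # invert for active-low segments
        value >>= 4
    return patterns
```

In the FPGA the same table would be a small lookup per digit, driven directly from the register holding the status value.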


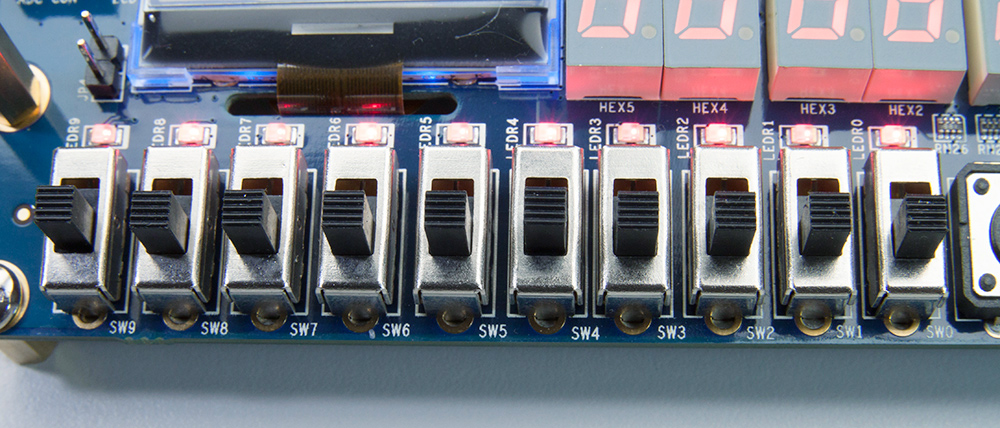

The switches and push buttons are useful for setting one or two variable parameters in an algorithm, for example a threshold level for object or edge detection, or lens distortion parameters. The switches are also helpful for selecting which image from within the processing stream is displayed, to show the progressive transformations made by successive image processing operations. From a teaching point of view, this gives a better understanding of the effects of operations and their interaction within an image processing algorithm. At a basic level, the switches also help with debugging an algorithm, by identifying where errors are being introduced.
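
A sketch of how the switches might be shared between a parameter and a display selector. It assumes ten slide switches read as one word, with SW[7:0] giving a threshold and SW[9:8] choosing which of four taps along a hypothetical per-pixel pipeline (input, gain, threshold, mask) is sent to the display. The mapping and function names are illustrative only; in hardware the final selection would be a multiplexer in front of the VGA output.

```python
def decode_switches(sw):
    """Split a 10-bit switch word into (threshold, display tap); hypothetical mapping."""
    threshold = sw & 0xFF        # SW[7:0]: threshold level, 0-255
    tap = (sw >> 8) & 0x3        # SW[9:8]: which pipeline stage to display
    return threshold, tap


def display_pixel(pixel, sw):
    """Model of a per-pixel pipeline with a switch-selected display tap."""
    threshold, tap = decode_switches(sw)
    gained = min(2 * pixel, 255)                # stage 1: fixed gain, saturating
    binary = 255 if gained >= threshold else 0  # stage 2: threshold
    masked = pixel if binary else 0             # stage 3: input masked by the threshold
    return (pixel, gained, binary, masked)[tap]
```

Because every stage is computed regardless of the selection, changing the switches changes only what is viewed, not the behaviour of the pipeline being inspected.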


For larger numbers of parameters, or when analysing more complex algorithms, it can be more helpful to have the parameters and selections controlled by a set of registers. For this, the infra-red receiver can be used to provide values for setting those registers.
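
One way the infra-red receiver could drive such a register bank, assuming the remote's key codes have already been decoded: digit keys accumulate a decimal number, a SELECT key chooses the register addressed by that number, and an ENTER key writes the number into the selected register. The key names, register count and entry scheme are all hypothetical.

```python
class IrRegisterBank:
    """Hypothetical register bank driven by already-decoded IR remote key codes."""

    SELECT, ENTER = "select", "enter"   # hypothetical non-digit keys

    def __init__(self, num_registers=16):
        self.regs = [0] * num_registers
        self.selected = 0
        self.entry = 0

    def key(self, code):
        if isinstance(code, int) and 0 <= code <= 9:
            self.entry = self.entry * 10 + code              # accumulate decimal digits
        elif code == self.SELECT:
            self.selected = self.entry % len(self.regs)      # choose a register
            self.entry = 0
        elif code == self.ENTER:
            self.regs[self.selected] = self.entry            # write the value
            self.entry = 0
```

For example, pressing 3, SELECT, 1, 2, 8, ENTER would set register 3 to 128.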

More in-depth debugging requires instantiating the SignalTap logic analyser (available with the Quartus Prime development tools). Again, the switches are helpful here for setting variable triggers. One technique I have found useful is to use the switches to select a row number within the image, and use this to provide a trigger for SignalTap. One disadvantage of debugging with SignalTap, especially when using the Quartus Prime Lite edition, is the need to completely recompile the design whenever additional signals are added for monitoring. Again, this is where having a large number of block memories is helpful, because more signals can be sampled for longer. This reduces the number of recompiles required while tracking down and diagnosing bugs.
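
The row-select trigger can be modelled in a few lines: a counter advances on each rising edge of a line-valid signal and resets while frame-valid is low, and the trigger fires on the first pixel of the row chosen by the switches. The signal names and the convention that rows count from 0 are assumptions; in hardware the comparison output would be one of the signals given to SignalTap as a trigger condition.

```python
def row_trigger(frame_valid, line_valid, target_row):
    """Yield True on the first pixel of row `target_row` (counting from 0) in each frame.

    frame_valid, line_valid: per-clock booleans, as sampled on the pixel clock.
    """
    row = -1
    prev_lv = False
    for fv, lv in zip(frame_valid, line_valid):
        if not fv:                    # between frames: reset the row counter
            row, prev_lv = -1, False
            yield False
            continue
        start_of_line = lv and not prev_lv
        if start_of_line:
            row += 1
        prev_lv = lv
        yield start_of_line and row == target_row
```

Reading `target_row` from the switches means the capture point can be moved around the image without recompiling.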

When interfacing with analogue video signals, the video decoder on the board is useful.

One of the key differences between the DE10-Standard and the DE1-SoC is the replacement of one of the 40-pin expansion headers with an HSMC expansion header. For image and video applications, the primary benefit of this is the ability to interface with other high-frequency inputs and outputs, such as HDMI and CameraLink interface cards.

Most of my work has focussed on low-level algorithms in hardware. For a more complete application, the dual-core ARM processor can be a significant help for higher-level processing and for managing communication with other systems. Here the other peripherals enable a powerful embedded system to be developed, with the compute-intensive image processing implemented on the FPGA fabric.

Case study

As a case study, I explored implementing a 512x512 two-dimensional FFT at video frame rates (60 frames per second) as the video was streamed from the camera to the display. The 1024x768 display was split in two, with the 512x512 input image from the camera displayed on the left, and the magnitude of the Fourier transform on the right. Since each output of the Fourier transform requires input from every input pixel, the Fourier transform is displayed on the following frame. As the 2D FFT is separable, it was implemented by taking the Fourier transform of each row, then of each column of the result. A pipelined 1D FFT block was constructed using a radix-2² architecture. This enabled the input pixels from the camera to be streamed directly to the FFT. The results were saved in off-chip DRAM. Once the 512 rows had been processed, the image was read in from DRAM by column, and the same 1D FFT block performed the FFT on the columns. The results were again stored in DRAM, to be read out later by row for display.
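
The row-column decomposition described above can be checked in software. In this sketch a plain recursive radix-2 FFT stands in for the pipelined radix-2² hardware block (it computes the same transform but has a different structure). The first pass transforms rows in raster order, and the second reads the intermediate result by column, mirroring the transpose through DRAM. The function names are hypothetical.

```python
import cmath


def fft(x):
    """Recursive radix-2 decimation-in-time FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        t = cmath.exp(-2j * cmath.pi * k / n) * odd[k]   # twiddle factor
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


def fft2_row_column(image):
    """2D FFT by the row-column method: rows first, then columns of the result."""
    rows = [fft(row) for row in image]                               # pass 1: by row
    h, w = len(rows), len(rows[0])
    cols = [fft([rows[r][c] for r in range(h)]) for c in range(w)]   # pass 2: by column
    return [[cols[c][r] for c in range(w)] for r in range(h)]        # back to row order


def magnitude(spectrum):
    """Magnitude image, as shown on the right half of the display."""
    return [[abs(z) for z in row] for row in spectrum]
```

The same functions work for a 512x512 image, although pure Python is far too slow for anything near video rate; the point is only to show the data flow.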

One issue with using the discrete Fourier transform is that it implicitly assumes that the image is periodic. This can result in significant cross-shaped artefacts in the frequency domain. A new approach to removing these artefacts is a periodic plus smooth decomposition, which separates an image into two components: an artefact-free periodic component, and a smooth component which is smooth everywhere except at the borders of the image. Removing the smooth component removes the border artefacts. Normally, the smooth component would require another 2D FFT to calculate; however, careful mathematical rearrangement enables it to be calculated with a single 1D FFT, so that it can be integrated directly within the original 2D FFT. This design was constructed and tested on the DE10-Standard board, using only about 6% of the logic resources, 3% of the on-chip memory blocks, and 10% of the DSP blocks (Bailey et al., 2017).
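
For reference, the standard periodic plus smooth decomposition (due to Moisan) computes the smooth component with a full 2D transform, which is the extra cost the paper avoids. The sketch below follows that standard formulation, not the single-1D-FFT rearrangement of Bailey et al. (2017), which is not reproduced here. It uses a naive O(n²) DFT so that it is self-contained, and is therefore only practical for small test images. The boundary image `v` holds the jumps across opposite image edges, and the smooth component `s` is the zero-mean solution of the periodic Poisson equation with `v` on the right-hand side.

```python
import cmath
import math


def dft(x, inverse=False):
    """Naive 1D DFT (O(n^2)); the inverse includes the 1/n scaling."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [sum(x[k] * cmath.exp(sign * 2j * math.pi * f * k / n) for k in range(n))
           for f in range(n)]
    return [z / n for z in out] if inverse else out


def dft2(img, inverse=False):
    """Separable 2D DFT: transform every row, then every column."""
    rows = [dft(r, inverse) for r in img]
    h, w = len(rows), len(rows[0])
    cols = [dft([rows[i][j] for i in range(h)], inverse) for j in range(w)]
    return [[cols[j][i] for j in range(w)] for i in range(h)]


def periodic_smooth(u):
    """Standard periodic plus smooth decomposition u = p + s (u needs at least 2x2 pixels).

    Returns (p, s, v), where v is the boundary image of jumps across opposite edges.
    """
    M, N = len(u), len(u[0])
    v = [[0.0] * N for _ in range(M)]
    for j in range(N):                 # jumps between the top and bottom rows
        d = u[M - 1][j] - u[0][j]
        v[0][j] += d
        v[M - 1][j] -= d
    for i in range(M):                 # jumps between the left and right columns
        d = u[i][N - 1] - u[i][0]
        v[i][0] += d
        v[i][N - 1] -= d
    V = dft2(v)
    S = [[0j] * N for _ in range(M)]   # leaving S[0][0] = 0 gives s zero mean
    for q in range(M):
        for r in range(N):
            if q or r:
                denom = 2 * math.cos(2 * math.pi * q / M) + 2 * math.cos(2 * math.pi * r / N) - 4
                S[q][r] = V[q][r] / denom
    s = [[z.real for z in row] for row in dft2(S, inverse=True)]
    p = [[u[i][j] - s[i][j] for j in range(N)] for i in range(M)]
    return p, s, v
```

A quick sanity check is that the periodic discrete Laplacian of `s` reproduces `v`, and that a constant image gives `s` equal to zero; the spectrum of `p` is then the artefact-free result.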

Summary

Overall, the DE10-Standard is an excellent platform for prototyping embedded vision algorithms, and is a significant step up from the DE1-SoC in terms of the resources available on the FPGA. For embedded vision, the primary platform is a low-cost FPGA, and for complete applications this is usually coupled with an embedded processor. The Cyclone V used on the DE10-Standard integrates both of these within a single chip. There are plenty of resources available on the FPGA to enable even complex algorithms to be developed and tested. In particular, the plentiful block memories and logic resources allow quite complex designs to be implemented.

While many of the peripherals of the DE10-Standard are not necessarily required for image processing, the 40-pin expansion header for connecting the camera, and the VGA display connector, are essential for on-line real-time processing. The HSMC expansion connector enables higher-speed HDMI and CameraLink devices to be connected, opening up these areas on a relatively low-cost platform.

The utility of the DE10-Standard as a platform for basic research on embedded vision has been demonstrated through its use in exploring novel algorithms and architectures in the case study on removing artefacts from FFTs. It would also serve as an ideal base for teaching image processing on FPGAs, with the on-board peripherals (switches, 7-segment displays, IR receiver) making it easy to demonstrate variations of algorithms, or to switch between different algorithms.

So, in summary, I would recommend the DE10-Standard to anyone interested in exploring image processing on FPGAs. It is well suited to hobbyists, educators, and researchers looking for a low-cost, entry-level platform without compromising on the resources available for the design.

Bailey, D.G., Mahmood, F. and Skoglund, U. (2017) "Reducing the cost of removing border artefacts in Fourier transforms", to be presented at the International Symposium on Highly Efficient Accelerators and Reconfigurable Technologies (HEART 2017), Bochum, Germany, 7-9 June 2017.